Linear Regression from Scratch

Single and multivariable linear regression built from first principles using only NumPy.

Results

Key Metrics

External ML Libraries: 0

Only Dependency: NumPy

Implementations (Single + Multi): 2

Solution Method: Matrix

Approach

Technical Overview

Normal Equation

The closed-form solution β = (XᵀX)⁻¹Xᵀy was implemented directly using NumPy matrix operations. When XᵀX is invertible, this gives the exact least-squares solution. Implementing it requires a solid grasp of linear algebra: matrix transposition, inversion, and the geometric interpretation of the fit as an orthogonal projection of y onto the column space of X.
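A minimal sketch of the normal equation in NumPy follows. It is not the project's actual code: the function name `fit_normal_equation`, the intercept-first coefficient layout, and the synthetic demo data are assumptions made for illustration. It also swaps the explicit inverse for `np.linalg.solve` on the system (XᵀX)β = Xᵀy, which yields the same β when XᵀX is invertible and is usually the more numerically stable choice.

```python
import numpy as np

def fit_normal_equation(X, y):
    """Return least-squares coefficients, intercept first (hypothetical helper)."""
    X = np.asarray(X, dtype=float)
    if X.ndim == 1:
        # Treat a 1-D input as a single feature column.
        X = X.reshape(-1, 1)
    y = np.asarray(y, dtype=float)
    # Prepend a column of ones so the first coefficient is the intercept.
    Xb = np.column_stack([np.ones(X.shape[0]), X])
    # Solve (X^T X) beta = X^T y instead of forming the inverse explicitly.
    return np.linalg.solve(Xb.T @ Xb, Xb.T @ y)

# Noise-free synthetic data (an assumption for the demo): y = 1.5 + 2*x1 - 3*x2.
rng = np.random.default_rng(0)
X_demo = rng.normal(size=(200, 2))
y_demo = 1.5 + X_demo @ np.array([2.0, -3.0])
beta_demo = fit_normal_equation(X_demo, y_demo)
```

Because the demo data contains no noise, `beta_demo` should recover the intercept and both slopes up to floating-point error. The same function covers the single-variable case when `X` is passed as a 1-D array.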

Gradient Descent

An iterative gradient descent implementation was also built. At each step it moves the weights against the gradient of the mean squared error, scaled by the learning rate. The implementation handles the learning rate, convergence criteria, and loss history tracking that practitioners deal with when the closed-form solution is computationally infeasible, for example when the number of features makes forming and inverting XᵀX too expensive.
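The loop described above can be sketched as batch gradient descent on the mean squared error. This is an illustrative sketch under stated assumptions, not the project's implementation: the function name `fit_gradient_descent`, the default `lr`, `max_iters`, and `tol` values, the stopping rule (change in loss below `tol`), and the demo data are all hypothetical choices.

```python
import numpy as np

def fit_gradient_descent(X, y, lr=0.1, max_iters=5000, tol=1e-12):
    """Batch gradient descent on MSE; returns (coefficients, loss history)."""
    X = np.asarray(X, dtype=float)
    if X.ndim == 1:
        X = X.reshape(-1, 1)
    y = np.asarray(y, dtype=float)
    n = X.shape[0]
    # Column of ones so the first coefficient is the intercept.
    Xb = np.column_stack([np.ones(n), X])
    beta = np.zeros(Xb.shape[1])
    history = []
    for _ in range(max_iters):
        residual = Xb @ beta - y
        loss = np.mean(residual ** 2)
        history.append(loss)
        # Assumed convergence criterion: stop once the loss barely changes.
        if len(history) > 1 and abs(history[-2] - loss) < tol:
            break
        # Gradient of mean((Xb @ beta - y)^2) with respect to beta.
        grad = (2.0 / n) * (Xb.T @ residual)
        beta -= lr * grad
    return beta, history

# Noise-free synthetic data (an assumption for the demo): y = 0.5 + x1 - 2*x2.
rng = np.random.default_rng(1)
X_gd = rng.normal(size=(200, 2))
y_gd = 0.5 + X_gd @ np.array([1.0, -2.0])
gd_beta, gd_history = fit_gradient_descent(X_gd, y_gd)
```

The features here are already on a unit scale, so a fixed learning rate of 0.1 converges quickly. On unscaled features the same rate may diverge, in which case standardizing the inputs or lowering `lr` is the usual remedy.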

Why This Matters for Quant Work

Linear regression is the foundation of factor models, risk attribution, and a large portion of quantitative strategy research. Building it from scratch demonstrates that the mathematical mechanics are understood, not just the sklearn API call. Regularized regression, coefficient covariance estimates, and the OLS assumptions all build on this foundation.

Gallery

Output & Visualizations

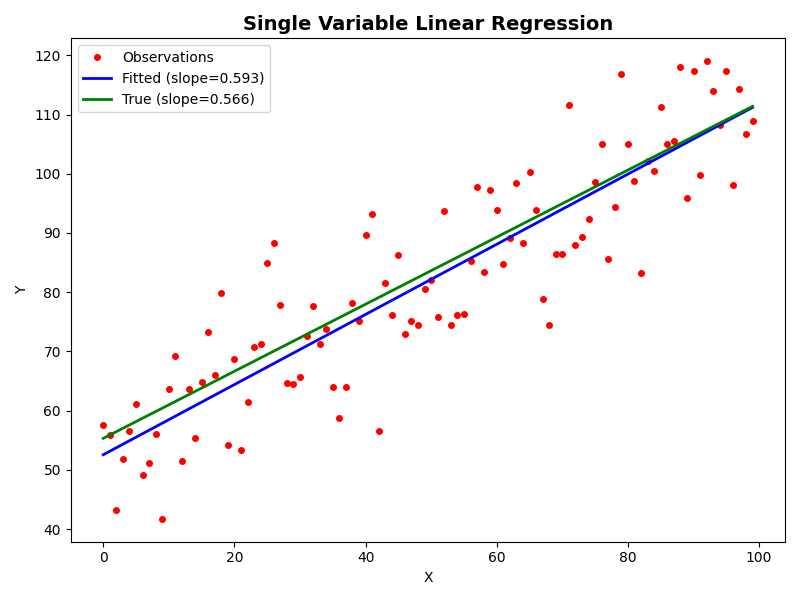

Single Variable Regression Fit — Observed data, fitted line, and true line

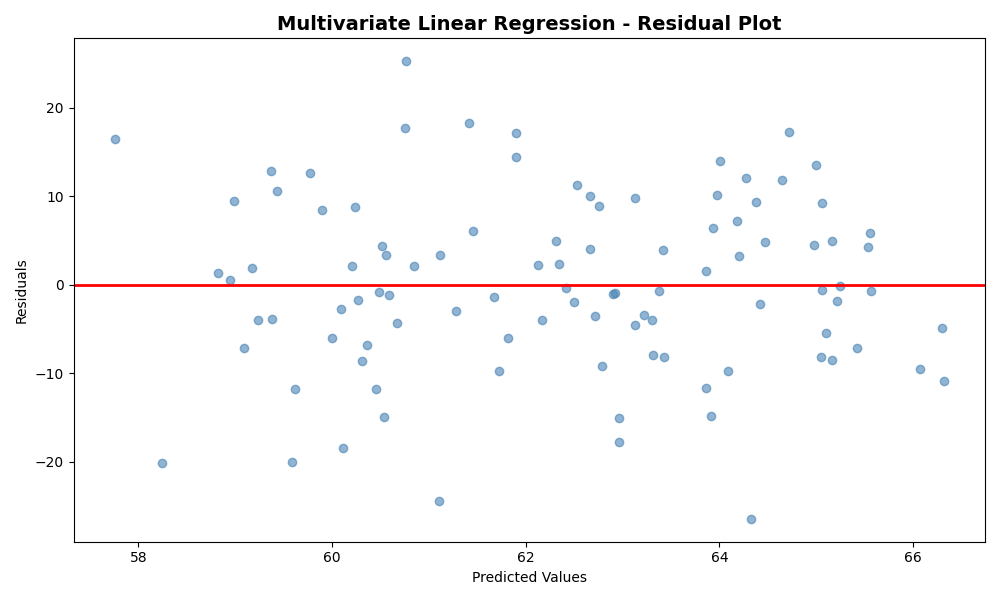

Residual Analysis — Residuals vs. predicted values for the multivariable model

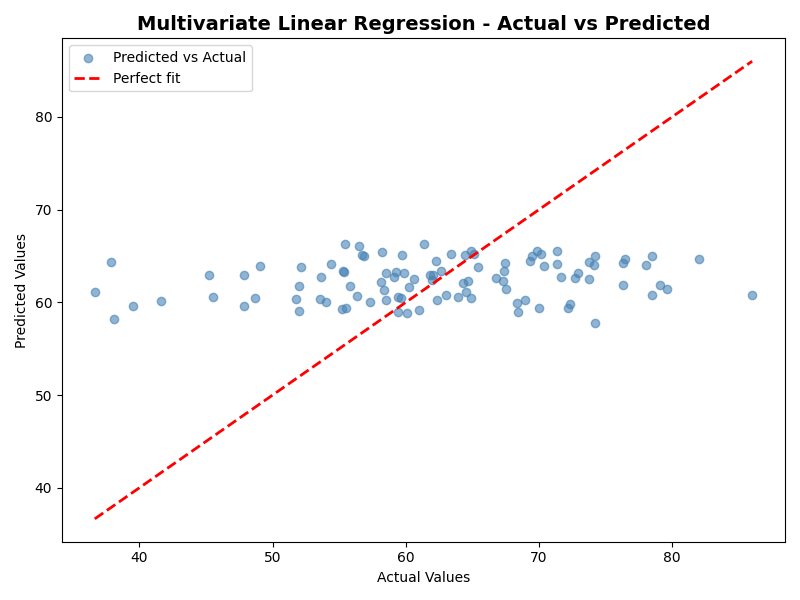

Actual vs. Predicted — Multivariable regression prediction accuracy

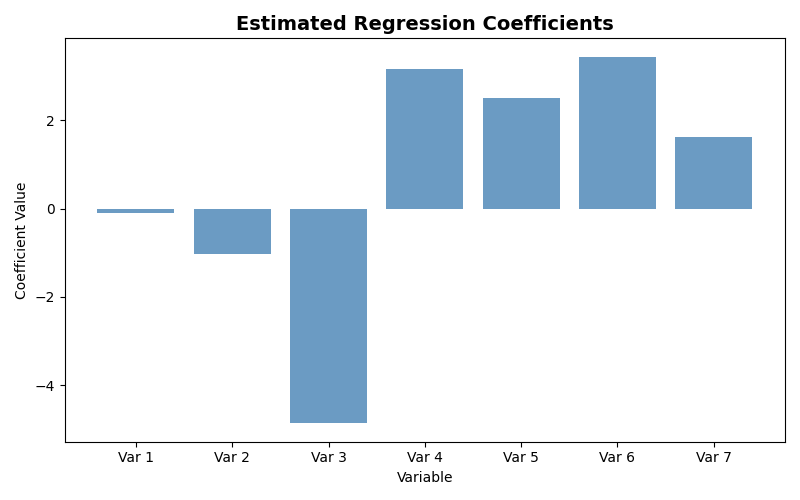

Estimated Coefficients — Bar chart of fitted regression coefficients

Stack